Memory-augmented Transformers Report: Analysis on the Market, Trends, and Technologies

The field of Memory-Augmented Transformers has moved from proof-of-concept research to measurable market momentum, driven by simultaneous hardware and software innovations that extend usable context to 262K tokens while reducing hallucination and operational cost (arXiv – Memory-Augmented Transformers: A Systematic Review). Patent and IP activity confirms industrial commitment, with reports noting a 73% rise in memory-related patent filings in recent years. These shifts create two linked commercial opportunities: (1) high-efficiency silicon and memory fabrics that narrow the memory/compute gap, and (2) structured, persistent memory software that converts transient LLM outputs into auditable, multi-session knowledge. Together they form a market vector that investors are already funding at scale.

We last updated this report 98 days ago. Tell us if you find something’s not quite right!

Topic Dominance Index of Memory-augmented Transformers

To gauge the impact of Memory-augmented Transformers, the Topic Dominance Index integrates time series data from three key sources: published articles, number of newly founded startups in the sector, and global search popularity.

Key Activities and Applications

- Long-context reasoning for domain workflows. Extending context windows into the hundreds of thousands of tokens enables single-pass legal reviews, full-paper scientific synthesis, and entire-codebase comprehension without manual chunking; such implementations are now reported in research settings and early commercial pilots.

- Retrieval and structured persistence for agents. Memory layers move beyond raw vectors to profile, episodic, and task-scoped storage that supports continuity across sessions, lowering hallucination and enabling agentic decision flows.

- Continual test-time learning and experience capture. Systems write compact summaries or utility-scored experiences at inference time so models “remember” useful interactions without full retraining, with reported improvements in task completion on agent benchmarks.

- Edge and on-device memory solutions. Non-volatile and analog memory approaches aim to enable persistent, low-power inference and local learning on sensors and robots where HBM/DRAM costs prohibit large-context models (Edge-AI Vision – When DRAM Becomes the Bottleneck).

- Memory fabric and disaggregation for data centers. CXL and similar fabrics disaggregate host memory to create scalable pools for inference and KV caches, reducing GPU HBM pressure and enabling “effectively larger” models at lower per-query cost.
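
To make the HBM pressure mentioned above concrete, the following is a rough back-of-the-envelope sketch of KV-cache size for a single long sequence. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen only for illustration, and 262,144 tokens is assumed as the exact figure behind "262K"; neither comes from the report.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor of 2: one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * seq_len


# Hypothetical model shape, for illustration only.
cfg = dict(n_layers=32, n_kv_heads=8, head_dim=128, bytes_per_elem=2)

per_token = kv_cache_bytes(1, **cfg)
full_context = kv_cache_bytes(262_144, **cfg)

print(per_token // 1024, "KiB per token")              # 128 KiB per token
print(full_context / 2**30, "GiB at 262,144 tokens")   # 32.0 GiB at 262,144 tokens
```

Under these assumptions a single full-length sequence already occupies tens of gigabytes, which is the kind of working set that pooled or disaggregated memory is meant to absorb.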

Emergent Trends and Core Insights

- Hardware/software co-design dominates product outcomes. Companies that pair in-memory compute or high-density on-chip memory with memory-aware model architectures report materially better cost/performance than those using only model changes (d-Matrix).

- Memory management is moving from ad-hoc to structural. The most practical gains come from organizing memory around profiles and episodes rather than flat embedding stores; this reduces retrieval noise and improves relevance for downstream reasoning tasks (GrandViewResearch – Retrieval Augmented Generation Market).

- Adaptive forgetting and surprise-driven allocation are becoming operational norms. Systems that prune or compress rarely used entries show consistent compute savings without performance loss.

- Standardization pressure on memory fabric and APIs. Vendors building CXL IP and composable memory links are positioned to capture platform value if an industry standard for memory pooling emerges.

- Patent and investment acceleration signal a commercialization window. Patent filing growth and concentrated funding rounds indicate that memory augmentation is progressing toward deployable silicon and enterprise software stacks (ChannelLife – AI set for post-transformer shift to memory-led tools).
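
The adaptive forgetting and surprise-driven allocation trend above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's implementation: "surprise" is taken to be one minus the highest cosine similarity to an existing entry, and pruning evicts the least-read entry. The class name, thresholds, and sample texts are all hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


class SurpriseGatedMemory:
    def __init__(self, surprise_threshold=0.2, capacity=3):
        self.surprise_threshold = surprise_threshold
        self.capacity = capacity
        self.entries = []  # each entry: {"vec": ..., "text": ..., "hits": int}

    def write(self, vec, text):
        """Store an entry only if it is sufficiently novel; return True if stored."""
        best = max((cosine(vec, e["vec"]) for e in self.entries), default=0.0)
        if 1.0 - best < self.surprise_threshold:
            return False  # too similar to something already remembered
        # Make room first, so the new entry is not evicted immediately.
        while len(self.entries) >= self.capacity:
            idx = min(range(len(self.entries)),
                      key=lambda i: self.entries[i]["hits"])
            del self.entries[idx]
        self.entries.append({"vec": vec, "text": text, "hits": 0})
        return True

    def read(self, vec):
        """Return the closest entry's text and count the access."""
        if not self.entries:
            return None
        best = max(self.entries, key=lambda e: cosine(vec, e["vec"]))
        best["hits"] += 1
        return best["text"]


mem = SurpriseGatedMemory(surprise_threshold=0.2, capacity=2)
first = mem.write([1.0, 0.0], "user prefers metric units")
duplicate = mem.write([0.99, 0.05], "user likes metric units")  # near-duplicate
second = mem.write([0.0, 1.0], "project deadline is Friday")
recalled = mem.read([0.9, 0.1])              # bumps hits on the first entry
third = mem.write([1.0, -1.0], "build target is arm64")  # evicts the unread entry
remaining = [e["text"] for e in mem.entries]
```

In this toy run the near-duplicate is rejected at write time, and the entry that was never read is the one evicted when capacity is reached. A production system would likely combine recency, utility scores, and compression rather than a simple hit count.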

Technologies and Methodologies

- Analog Compute-in-Memory (CIM) and RRAM / MRAM integration. These approaches embed matrix operations in memory arrays to cut energy per MAC and shrink data movement, enabling large context at lower power (PIMIC.ai).

- CXL-based disaggregated memory fabrics. CXL controllers and switches create low-latency pools that let inference systems oversubscribe local HBM and centralize cold state affordably (Panmnesia).

- Profile-centric memory models and token-aware summarization. Tiered storage (hot KV caches, warm embeddings, cold archives) combined with token-aware summarizers reduce storage and retrieval costs while preserving relevant context.

- Test-Time Training and small mutable model slices. Controlled, on-the-fly adaptation uses a tiny portion of model parameters for short-term learning, keeping inference costs bounded while improving recall of fresh facts.

- Memory governance and auditable persistence. Encryption, tenant scoping, and tokenized access control are emerging as mandatory features for enterprise adoption of persistent memory layers.
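
The tiered storage idea described above (hot, warm, cold) can be illustrated with a small sketch. It assumes a simple policy that is not taken from the report: the hot tier is a least-recently-used cache of full text, demoted items leave a summary in the warm tier and their full text in a cold archive, and the oldest summaries are dropped when the warm tier overflows. The `summarize` function is a stand-in that keeps the first few words; a real system would use a token-aware summarizer.

```python
from collections import OrderedDict


def summarize(text, max_words=8):
    """Stand-in for a token-aware summarizer: keeps the first few words."""
    return " ".join(text.split()[:max_words])


class TieredMemory:
    def __init__(self, hot_capacity=2, warm_capacity=2):
        self.hot_capacity = hot_capacity
        self.warm_capacity = warm_capacity
        self.hot = OrderedDict()   # full text, least recently used first
        self.warm = OrderedDict()  # short summaries, oldest first
        self.cold = {}             # full-text archive, unbounded in this sketch

    def put(self, key, text):
        # A fresh write supersedes any older copies in lower tiers.
        self.warm.pop(key, None)
        self.cold.pop(key, None)
        self.hot[key] = text
        self.hot.move_to_end(key)
        self._rebalance()

    def get(self, key):
        """Return (tier, value), checking the cheapest tier first."""
        if key in self.hot:
            self.hot.move_to_end(key)
            return "hot", self.hot[key]
        if key in self.warm:
            return "warm", self.warm[key]
        if key in self.cold:
            return "cold", self.cold[key]
        return None, None

    def _rebalance(self):
        # Demote least recently used hot items: summary to warm, full text to cold.
        while len(self.hot) > self.hot_capacity:
            key, text = self.hot.popitem(last=False)
            self.warm[key] = summarize(text)
            self.cold[key] = text
        # Drop the oldest summaries; the full text remains in the cold archive.
        while len(self.warm) > self.warm_capacity:
            self.warm.popitem(last=False)


tiers = TieredMemory(hot_capacity=1, warm_capacity=1)
tiers.put("a", "alpha one two three four five six seven eight nine")
tiers.put("b", "beta note")
tiers.put("c", "gamma note")
print(tiers.get("c"))  # ('hot', 'gamma note')
print(tiers.get("b"))  # ('warm', 'beta note')
print(tiers.get("a")[0])  # cold
```

The design choice here is that summaries are disposable while the archive is authoritative, so a warm-tier eviction never loses information; it only makes the next retrieval slower.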

Memory-augmented Transformers Funding

A total of 38 Memory-augmented Transformers companies have received funding.

Overall, Memory-augmented Transformers companies have raised $919.4M.

Companies within the Memory-augmented Transformers domain have secured capital from 126 funding rounds.

The chart shows the funding trendline of Memory-augmented Transformers companies over the last 5 years.

Memory-augmented Transformers Companies

- MemChain AI — MemChain AI builds a structured, persistent memory platform that scopes memory to global, tenant, and agent levels and adds audit trails and token-based access controls. The product targets agent builders who need secure, multi-tenant memory that supports summarization and graph search. Its emphasis on enterprise deployment models and hybrid hosting addresses regulatory and latency constraints.

- Llongterm — Llongterm offers an API-first middleware that adds long-term memory to any LLM, focused on compact, developer-friendly integrations for session continuity. The company positions memory as a developer primitive, reducing engineering effort to add persistent personalization. Its small team and API focus make it an attractive integration partner for specialized SaaS.

- Flumes — Flumes provides a unified memory API optimized for token-aware summarization, tiered storage, and PII controls so agents can scale memory cost-effectively without managing vector stores. The platform includes analytics for memory usage and automatic pruning rules that cut storage waste. Flumes targets early enterprise adopters seeking operational memory without heavy infrastructure changes.

- Memobase — Memobase implements user profile–based memory that stores only high-value attributes and session summaries instead of full document dumps, improving latency and privacy. The team offers an open-source core plus managed options for rapid prototyping and cost comparisons against classic RAG. Their benchmarking shows lower inference costs and faster response times for personalized apps.

- TORmem Inc — TORmem focuses on disaggregated memory for data centers, promoting a single memory pool for multiple servers to reduce duplication and enable cheaper large-context inference. TORmem’s approach addresses the HBM bottleneck by enabling high-speed shared memory fabrics that decouple working sets from individual GPUs. The company targets cloud and hyperscaler partners looking to extend model context without multiplying accelerator costs.
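
Several of the profiles above describe memory scoped to global, tenant, and agent levels with audit trails and token-based access. The sketch below shows one way such scoping could work. It is an illustrative assumption, not the API of any company listed: the class, method names, tokens, and tenant names are all hypothetical, and lookups simply fall back from agent to tenant to global scope.

```python
class ScopedMemory:
    SCOPES = ("agent", "tenant", "global")  # lookup order, most specific first

    def __init__(self):
        self.store = {}   # (scope, owner, key) -> value
        self.tokens = {}  # access token -> (tenant_id, agent_id)
        self.audit = []   # append-only access records

    def grant(self, token, tenant_id, agent_id):
        self.tokens[token] = (tenant_id, agent_id)

    def _owners(self, token):
        """Map each scope to the owner this token may act as."""
        if token not in self.tokens:
            raise PermissionError("unknown token")
        tenant_id, agent_id = self.tokens[token]
        return {
            "agent": f"{tenant_id}/{agent_id}",
            "tenant": tenant_id,
            "global": "*",
        }

    def write(self, token, scope, key, value):
        owners = self._owners(token)
        if scope not in owners:
            raise ValueError(f"unknown scope: {scope}")
        self.store[(scope, owners[scope], key)] = value
        # Record the identity, not the raw token, in the audit trail.
        self.audit.append(("write", owners["agent"], scope, key))

    def read(self, token, key):
        owners = self._owners(token)
        for scope in self.SCOPES:
            entry = (scope, owners[scope], key)
            if entry in self.store:
                self.audit.append(("read", owners["agent"], scope, key))
                return self.store[entry]
        self.audit.append(("miss", owners["agent"], None, key))
        return None


scoped = ScopedMemory()
scoped.grant("tok-a", "acme", "bot1")     # hypothetical tenants and agents
scoped.grant("tok-b", "globex", "bot9")
scoped.write("tok-a", "tenant", "tone", "formal")
scoped.write("tok-a", "global", "units", "metric")

same_tenant = scoped.read("tok-a", "tone")    # visible to the owning tenant
other_tenant = scoped.read("tok-b", "tone")   # not visible across tenants
shared = scoped.read("tok-b", "units")        # global scope is shared
```

This sketch lets any valid token write to the global scope, which a real deployment would restrict; it also omits encryption and token expiry, both of which the report lists as enterprise requirements.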

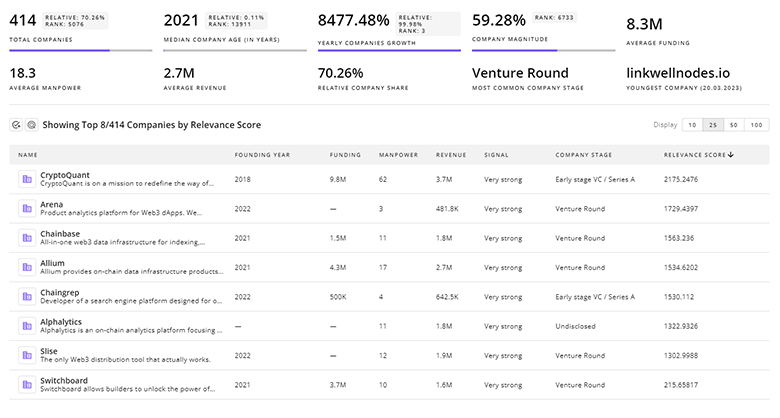

172 Memory-augmented Transformers Companies

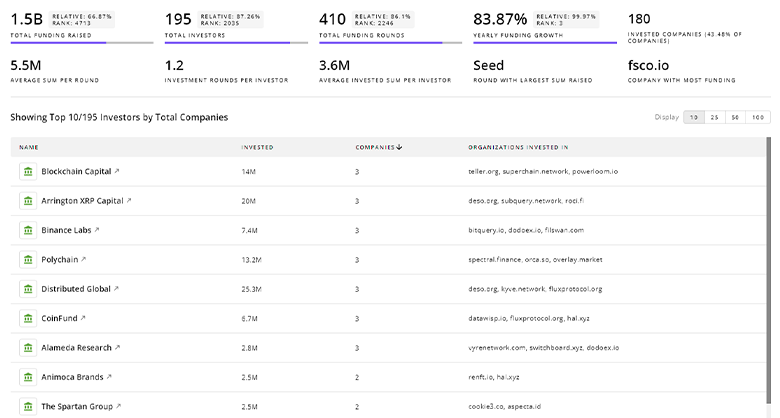

Memory-augmented Transformers Investors

TrendFeedr’s Investors tool offers comprehensive insights into 203 Memory-augmented Transformers investors by examining funding patterns and investment trends. This enables you to strategize effectively and identify opportunities in the Memory-augmented Transformers sector.

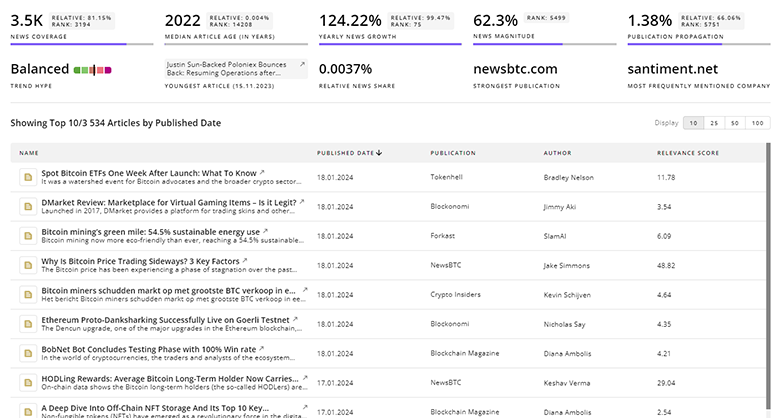

Memory-augmented Transformers News

TrendFeedr’s News feature provides access to 556 Memory-augmented Transformers articles. This extensive database covers both historical and recent developments, enabling innovators and leaders to stay informed.

Executive Summary

Memory-augmented transformers are converging on two complementary value propositions: persistent, structured memory that improves model usefulness for multi-session, agentic workflows, and hardware-level memory innovations that lower the cost of maintaining very large contexts. Market indicators—token capacities reported at 262K, patent growth, and concentrated funding—show that the sector has left pure research and is now subject to product and standards competition. Strategic winners will either control key pieces of the memory fabric (CXL IP, persistent NVM, or analog CIM) or own the memory abstraction that enterprises adopt for auditable, privacy-safe agent deployments. Organizations evaluating investment or product bets should align technical roadmaps to both dimensions: secure a path to efficient persistent storage while building memory semantics that deliver measurable reductions in hallucination, latency, and total cost of ownership.
