High Performance Computing Report: Analysis on the Market, Trends, and Technologies

The high-performance computing (HPC) sector is experiencing significant growth driven by innovations in computational techniques and a growing presence across industries. With 1.8K companies engaged in HPC and a substantial market presence in IT services and software development, the sector continues to evolve. Key industry trends include the integration of big data and data storage, parallel computing, and cloud rendering. Notable emerging trends further shaping the industry include exascale systems and the convergence of AI and machine learning with HPC. The sector’s growth is evidenced by a 51.51% increase in news coverage and a 32.5% increase in topic share over the past five years. Companies are developing solutions for cloud computing, data disaster recovery, and endpoint security, among other applications. The investment landscape is robust, with significant funding growth and contributions from major investors. This indicates a strong trajectory for future advancements and innovations in HPC.

We updated this report 623 days ago.

Topic Dominance Index of High Performance Computing

The Topic Dominance Index combines the distribution of news articles that mention High Performance Computing, the timeline of newly founded companies working within this sector, and the share of voice within global search data.

Key Activities and Applications

- System Design & Architecture: Development and manufacturing of servers, workstations, storage systems, and thin clients.

- Software Development: Provision of operating systems and computer application services for cloud computing and data disaster recovery.

- Performance Optimization: Algorithm development and performance tuning to enable massively parallel computing and maximize performance.

- Networking: High-speed interconnects ensure quick data transfer between nodes and real-time data processing.

- Industry Applications: Modeling and analysis for scientific research, prototyping and simulation for engineering, and risk analysis for the financial sector.

- Energy Efficiency: Leveraging energy-efficient architecture and renewable energy to reduce the carbon footprint.

Emergent Trends and Core Insights

- Exascale Systems: Systems capable of at least one exaFLOPS (10^18, or a billion billion, floating-point operations per second), which represents a significant leap in computational power.

- Cloud-based HPC: Growth in cloud rendering services, driven by demand for high-efficiency computational technologies.

- AI Integration: Combining AI and HPC to solve complex problems, and developing specialized hardware such as GPUs and TPUs to accelerate AI and machine learning workloads.

- Distributed Computing Models: Moving computational tasks closer to data sources to reduce latency and bandwidth usage.

- Heterogeneous Computing: Using diverse architectures, like CPUs, GPUs, and FPGAs, to optimize performance and develop customizable hardware.

- Automation & Orchestration: Automating resource management and workload orchestration in HPC systems to optimize performance.
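
To put the exascale figure above in perspective, the following minimal sketch compares ideal runtimes for the same job at one exaFLOPS and at one petaFLOPS. The workload size and the petascale comparison point are hypothetical values chosen for illustration, not figures from this report, and the calculation ignores memory, I/O, and communication overheads.

```python
# Illustrative arithmetic only: the workload size and the petascale
# comparison point below are hypothetical, not figures from this report.

EXAFLOPS = 10**18   # one exaFLOPS: 10^18 floating-point operations per second
PETAFLOPS = 10**15  # one petaFLOPS: 10^15 floating-point operations per second


def runtime_seconds(total_operations: int, sustained_flops: int) -> float:
    """Ideal runtime of a workload at a given sustained rate (no overheads)."""
    return total_operations / sustained_flops


# Hypothetical workload: 3.6 * 10^21 floating-point operations.
workload = 36 * 10**20

exa_time = runtime_seconds(workload, EXAFLOPS)
peta_time = runtime_seconds(workload, PETAFLOPS)

print(f"1 exaFLOPS system:  {exa_time / 3600:.1f} hours")
print(f"1 petaFLOPS system: {peta_time / 86400:.1f} days")
```

Under these assumptions the job drops from several weeks to about an hour. Real applications rarely sustain a machine's peak rate, so the sketch gives an upper bound on the benefit.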

Technologies and Methodologies

- Photonics: Use of photonics for scalable, high-bandwidth, and energy-sustainable AI, ML, and simulation hardware.

- Advanced Processors: Multi-core CPUs, GPUs, and other processing units to enable parallel and highly efficient processing of AI and ML workloads.

- AI-integrated HPC: Provision of AI cloud computing services to accelerate artificial intelligence computing in data centers.

- In-memory Computing: Storing data in RAM to improve processing speed and response, especially in business applications.

- Quantum Computing: Developing quantum processors to address specific issues and utilizing classical hardware to simulate quantum systems for research.

- Energy-efficient Computing: Using dynamic voltage and frequency scaling (DVFS) and power-aware scheduling to optimize power usage and energy efficiency of HPC systems.
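
The trade-off behind DVFS can be sketched with the textbook approximation for CMOS dynamic power, P ≈ C·V²·f. The example below is a rough illustration under stated assumptions, not a model of any particular processor: the capacitance, static power, cycle count, and the two operating points are hypothetical, and static power is treated as constant even though leakage also varies with voltage.

```python
# Minimal sketch of the trade-off behind DVFS, using the textbook
# approximation for CMOS dynamic power: P_dyn ~ C_eff * V^2 * f.
# All numeric values are hypothetical and chosen only for illustration.
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    name: str
    voltage_v: float      # supply voltage in volts
    frequency_hz: float   # clock frequency in hertz


def dynamic_power_w(c_eff_farads: float, point: OperatingPoint) -> float:
    """Dynamic power in watts: C_eff * V^2 * f."""
    return c_eff_farads * point.voltage_v**2 * point.frequency_hz


def job_energy_j(cycles: float, c_eff_farads: float, static_power_w: float,
                 point: OperatingPoint) -> float:
    """Energy for a job of `cycles` clock cycles: (P_dyn + P_static) * runtime."""
    runtime_s = cycles / point.frequency_hz
    return (dynamic_power_w(c_eff_farads, point) + static_power_w) * runtime_s


C_EFF = 1e-9     # hypothetical effective switched capacitance (farads)
P_STATIC = 0.5   # hypothetical static power (watts), assumed constant
CYCLES = 3e12    # hypothetical job length in clock cycles

points = [
    OperatingPoint("high", voltage_v=1.2, frequency_hz=3.0e9),
    OperatingPoint("low", voltage_v=0.9, frequency_hz=2.0e9),
]

for p in points:
    runtime = CYCLES / p.frequency_hz
    energy = job_energy_j(CYCLES, C_EFF, P_STATIC, p)
    print(f"{p.name}: {dynamic_power_w(C_EFF, p):.2f} W dynamic, "
          f"{runtime:.0f} s runtime, {energy:.0f} J total")
```

With these numbers the lower operating point finishes the job later but uses less total energy, which is the kind of trade-off a power-aware scheduler weighs. Because static power accrues for the whole runtime, slowing down too far can raise total energy again.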

High Performance Computing Funding

A total of 434 High Performance Computing companies have received funding.

Overall, High Performance Computing companies have raised $20.4B.

Companies within the High Performance Computing domain have secured capital from 1.1K funding rounds.

The chart shows the funding trendline of High Performance Computing companies over the last five years.

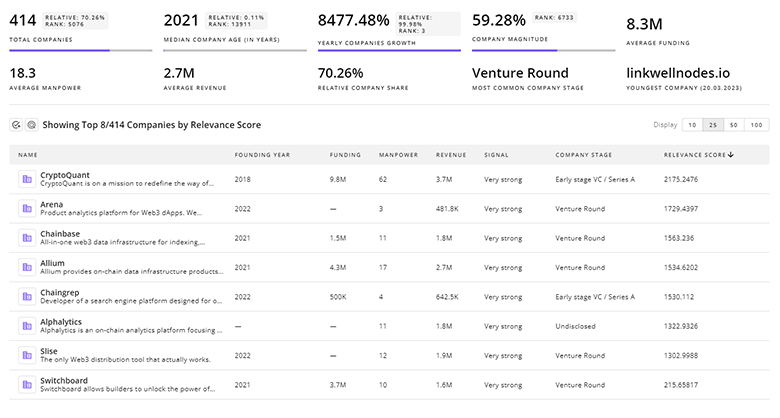

High Performance Computing Companies

Gain a competitive edge with access to 1.8K High Performance Computing companies.


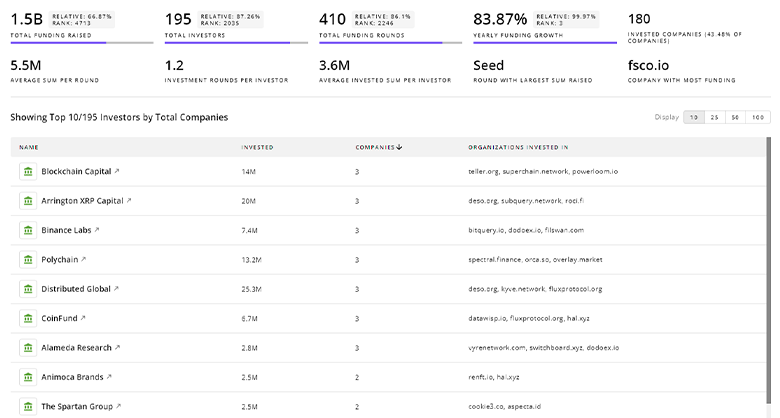

High Performance Computing Investors

Leverage TrendFeedr’s sophisticated investment intelligence on 488 High Performance Computing investors. It covers funding rounds, investor activity, and key financial metrics in High Performance Computing. The Investors tool is ideal for business strategists and investment experts, as it offers the insights needed to seize investment opportunities.


High Performance Computing News

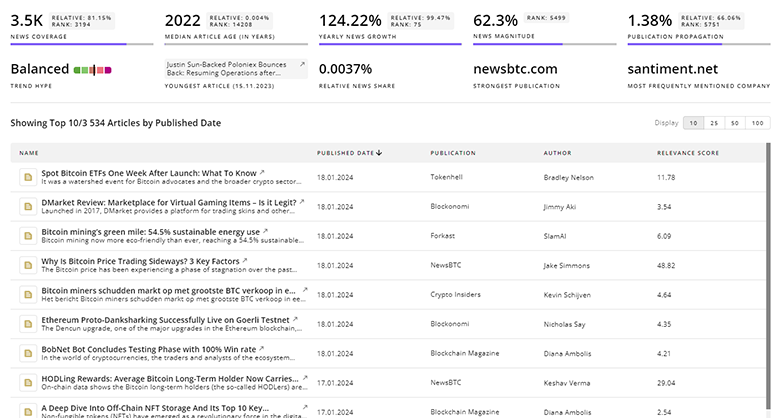

TrendFeedr’s News feature provides a historical overview and the current momentum of High Performance Computing by analyzing 21.1K news articles. This tool allows market analysts and strategists to keep up with the latest market developments.


Executive Summary

As advancements in AI, quantum computing, and energy-efficient technologies converge with the demands of data-intensive industries, the future of HPC promises further breakthroughs and new applications. The sustained growth and investment in this sector underscore its critical role in solving complex global challenges, from scientific research to financial modeling and beyond. For those looking to stay ahead in this rapidly evolving field, continuous engagement with the latest trends and innovations is essential. Whether you are an industry professional, researcher, or investor, staying informed and connected will position you to lead in this growing sector.
